@Codeberg Great job!

@Codeberg Really need to sue them for a denial-of-service attack and get them banned from touching a computer for 20 years.

@Codeberg I've been moving my stuff to Codeberg. Glad to see you have a presence on Mastodon! Thanks for being there.

@Codeberg

Keep up the good work!

@Codeberg can you identify the owners? I wonder if they are famous companies or someone else (not asking for names, just wondering).

Are you guys using traffic shaping and queue management at all? For example putting something like QFQ qdisc on your routers and then marking packets from spammy sources as low-priority and putting them into a low priority queue can be a huge boost in responsiveness for your real customers.

Spammy sources could be those that open new connections too often, transfer too many bytes, or have too many open active connections. All of those kinds of things can be accounted in nftables.
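A rough Python sketch of that classification logic, only to make the idea concrete. In a real setup the counters would come from nftables and the result would be a packet mark feeding the low-priority queue; the thresholds and names below are made up for illustration.

```python
from dataclasses import dataclass

# Hypothetical thresholds, chosen only for illustration.
MAX_NEW_CONNS_PER_MIN = 60
MAX_BYTES_PER_MIN = 50_000_000
MAX_ACTIVE_CONNS = 20


@dataclass
class SourceStats:
    """Per-source counters, as they might be accounted by the firewall."""
    new_conns_per_min: int
    bytes_per_min: int
    active_conns: int


def priority_class(stats: SourceStats) -> str:
    """Return "low" if any of the three counters exceeds its threshold."""
    spammy = (
        stats.new_conns_per_min > MAX_NEW_CONNS_PER_MIN
        or stats.bytes_per_min > MAX_BYTES_PER_MIN
        or stats.active_conns > MAX_ACTIVE_CONNS
    )
    return "low" if spammy else "normal"
```

Sources classified as "low" would still be served, just after everyone else, which is gentler than an outright block when the detection is wrong.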

Thank You For Your Service. (I moved to Codeberg, like, yesterday, and signed up for a recurring donation.)

gzip bomb when?

@Codeberg what if the new captcha was get a bug fix PR merged? That'd keep them robits out.

@Codeberg could just set up a few traps that crash the AI crawlers or something. This is going to get really annoying, and hopefully these bastards don't interfere with some of my work in the long run with what they've been doing on the internet. Scraping is already largely frowned upon, so these pos are just making it worse.

😲🤬 re: what's happened to @Codeberg today.

The AI ballyhoo *is* a real DDoS against one of the few code hosting sites that takes a stand against slurping #FOSS code into LLM training sets — in violation of #copyleft.

Deregulation/lack-of-regulation will bring more of this. ∃ plenty of blame to go around, but #Microsoft & #GitHub deserve the bulk of it; they trailblazed the idea that FOSS code-hosting sites are lucrative targets.

It seems like the AI crawlers learned how to solve the Anubis challenges. Anubis is a tool hosted on our infrastructure that requires browsers to do some heavy computation before accessing Codeberg again. It really saved our nerves over the past months, because it spared us from manually maintaining blocklists and gave us a working way to tell "real browsers" from "AI crawlers".

However, we can confirm that at least Huawei networks now send the challenge responses, and they do seem to take a few seconds to compute the answers. It looks plausible, so we assume that AI crawlers leveled up their computing power to emulate more real-browser behaviour and bypass the variety of challenges that platforms have enabled to keep out the bot army.

We have a list of explicitly blocked IP ranges. However, a configuration oversight on our part meant these ranges were only blocked on the "normal" routes. The "anubis-protected" routes didn't consider the blocklist. This was not a problem as long as Anubis itself kept the crawlers off those routes.

However, now that they managed to break through Anubis, there was nothing stopping these armies.

It took us a while to identify and fix the config issue, but we're safe again (for now).
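To illustrate the kind of fix described here, a minimal Python sketch in which the blocklist is checked before any route-specific logic, so that passing the challenge cannot bypass it. This is not Codeberg's actual configuration; the function names, route labels as values, and the IP ranges (taken from the reserved documentation address space) are assumptions.

```python
import ipaddress

# Placeholder ranges from the documentation address space, not real blocklist entries.
BLOCKED_RANGES = [
    ipaddress.ip_network("203.0.113.0/24"),
    ipaddress.ip_network("2001:db8::/32"),
]


def is_blocked(client_ip: str) -> bool:
    """True if the client address falls inside any blocked range."""
    addr = ipaddress.ip_address(client_ip)
    return any(addr in net for net in BLOCKED_RANGES)


def handle(client_ip: str, route_kind: str, passed_challenge: bool) -> str:
    """Decide what to do with a request (hypothetical route kinds)."""
    # The blocklist applies to every route kind, challenge-protected or not.
    if is_blocked(client_ip):
        return "blocked"
    # Only afterwards does the challenge come into play.
    if route_kind == "anubis-protected" and not passed_challenge:
        return "challenge"
    return "served"
```

The oversight in the thread corresponds to running the `is_blocked` check only on the "normal" branch, which leaves the challenge as the sole defence on the protected routes.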

@Codeberg Thanks for fighting the good fight!

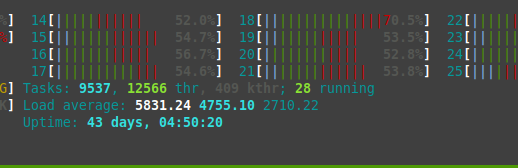

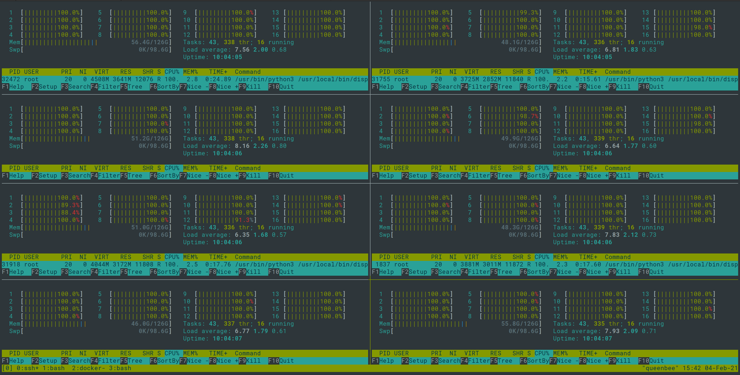

For the load average auction, we offer these numbers from one of our physical servers. Who can offer more?

(It was not the "wildest" moment, but the only one for which we have a screenshot.)

@Codeberg wow

@Codeberg In the days of single CPU servers (early 90s?) and an interesting filesystem problem, I think I may have seen ~400 at a client site!

@Codeberg ouch. This remains a cat-and-mouse game.

At least having them solve the Anubis challenge does cost them extra resources, but if they can do that at scale, it doesn't promise a lot of good.

@Codeberg wow - that looks scary. Thanks for all your work ❤️

@Codeberg I'm really sorry there isn't a good legal avenue to stave off the abuse. Horrifying.

@Codeberg I really wish you contacted me at all about this before going public.

@cadey I'm sorry if this gave you any unwanted or negative attention. I consider crawlers emulating more of real browser features to bypass protections of websites an inevitable future, and today at least one big crawler seems to have started doing so. ~f

@Codeberg Can we continue this conversation over email after my panic subsides? [email protected].

@Codeberg yeowsa. this feels like an arms race that is going to get harder :(

@Codeberg This is a great number, but I have seen higher in my career. Unfortunately I either have no screenshots or lost what I already have.

5831.24 is pretty good though. Congrats on hitting it, hope your head doesn't hurt. :D

@Codeberg

How much RAM do you have in your machines?

@lindesbs 160 GB apparently. Looked it up from https://codeberg.org/Codeberg-Infrastructure/meta/src/branch/main/hardware/achtermann.md. ~f

@Codeberg damn. The only time I've seen numbers like this were when a ceph server went down.

@Codeberg so what is the threshold for alerting? Grafana/Zabbix/Prometheus?

@Codeberg huh, that's a pretty kernel-heavy workload, so much red

@Codeberg omfg that load!

@Codeberg thank you for the details. Very interesting. They are worth a blog post.

@Codeberg what if you had challenges for AI to perform that made it mine bitcoin for you and you just block them at the end anyway 🤣

@Codeberg Here goes more™...

@Codeberg How much of that load was actual I/O wait?

@Codeberg Why not just block Huawei Cloud ASN prefixes?

It's easy to get them (e.g. from projectdiscovery)

@lenny If you read the thread, you'll notice that this is exactly what we did, except that we made a mistake. ~f

@Codeberg Great thread and explanation. Thank you.

@Codeberg so, to clarify, do you have evidence that the bots were solving Anubis challenges or not, i.e., it was due to the configuration issue? (I think it's inevitably going to happen if Anubis gets traction. I'm just curious if we're already there or not.) Thanks for your work and transparency on all this.

@zacchiro Yes, the crawlers completed the challenges. We tried to verify if they are sharing the same cookie value across machines, but that doesn't seem to be the case.

I have a follow up question, though, @Codeberg, re: @zacchiro's question. Is it *possible* that giant human farms of Anubis challenge-solvers actually did it? Or did it all happen so fast that there is no way it could be that?

#Huawei surely could fund such a farm and the routing software needed to get the challenge to the human and back to the bot quickly enough that it might *seem* the bot did it.

@bkuhn

Anubis challenges are not solved by humans. It's not like a captcha. It's a challenge that the browser computes, based on the assumption that crawlers don't run real browsers for performance reasons and only implement simpler crawlers.

So at least one crawler now seems to emulate enough browser behaviour to make it pass the anubis challenge. ~f
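For readers unfamiliar with this kind of challenge, here is a generic hash-based proof of work in Python. It is a sketch of the general technique, not Anubis's actual code: the challenge string, the way the nonce is appended, and the difficulty value are all assumptions.

```python
import hashlib
from itertools import count


def verify(challenge: str, nonce: int, difficulty: int) -> bool:
    """Cheap for the server: one hash, check for leading zero hex digits."""
    digest = hashlib.sha256(f"{challenge}{nonce}".encode()).hexdigest()
    return digest.startswith("0" * difficulty)


def solve(challenge: str, difficulty: int) -> int:
    """Expensive for the client: try nonces until the hash matches."""
    for nonce in count():
        if verify(challenge, nonce, difficulty):
            return nonce


# Usage: each extra hex digit of difficulty makes solving about 16x slower,
# while verification stays a single hash.
nonce = solve("example-challenge", 3)
assert verify("example-challenge", nonce, 3)
```

Nothing here requires a human, which is the point made above: any client willing to spend the CPU time can pass.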

@zacchiro

@Codeberg Is your list shared? It would be good to have a list of carefully curated AI-bot block lists.

@Codeberg are the ip blocklists public?

@nemo Currently not. We wanted to investigate the legal situation with regards to sharing such lists. They could currently contain individuals' IP addresses and likely need to be cleaned up first. ~f

@Codeberg no worries, ty for fighting the good fight o7

@Codeberg Was the solution to increase the proof-of-work difficulty?

@baltakatei No. We fixed our config. Now we're blocking the offending IP ranges directly. ~f

@Codeberg Damn it. I hate AI !

@Codeberg have you tried filing a criminal complaint against the "attacker"? Because basically it's a breach of ToS and a DoS, right? So it might qualify as a violation of § 303b StGB (German criminal code). I mean, I am no lawyer, but at least it's worth a try?

@Codeberg How much were they slowed down by actually solving the challenges? I was under the impression that the proof of work was the primary intent of Anubis, and the fact that most crawlers just bombed out and didn't even attempt them in the first place was a bonus.

@Codeberg It makes me wonder: is there a public curated IP blocklist somewhere that we can all use? I searched a bit, but I found only weak robots.txt solutions based on User-Agent.

@Codeberg Seems like a bad cat-and-mouse game, glad that you could stay on top of it (proves that humans can still win). Jesus Christ, those big tech companies should be held responsible for that shit and pay billions in fines. Maybe then they would think about stopping that insanity.

@Codeberg instead of blocking, poison the content for them...

@Codeberg Good luck with fighting the bots. I recently moved my OSDev project and site to Codeberg from GitHub and so far it’s been great!

Thank you for helping the open-source community!

@Codeberg this is an absurd level of waste they're introducing

Pardon my ignorance, but couldn't they just be using a headless browser, which would still do everything a regular browser does? Just recently, ChatGPT beat Cloudflare's CAPTCHA using a similar system. Is there really any way around this at all? @[email protected]

At the end of the bait, you can put gzip bombs or, more complicated, multiple bait links, where multiple visits cause the IP to be temporarily nullrouted (a human may visit the bait once).
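The multiple-bait-links idea could look roughly like this in Python. It only tracks hits and decides when an IP should be blocked; the actual nullrouting would happen in the firewall or router. The limits and the class name are made up for illustration.

```python
import time
from collections import defaultdict

# Hypothetical limits: a human may hit a bait link once, a crawler hits many.
BAIT_HIT_LIMIT = 3
WINDOW_SECONDS = 600
BLOCK_SECONDS = 3600


class BaitTracker:
    def __init__(self):
        self._hits = defaultdict(list)   # ip -> timestamps of recent bait hits
        self._blocked_until = {}         # ip -> time when the block expires

    def record_bait_hit(self, ip, now=None):
        """Register a visit to a bait link; block the IP after repeated hits."""
        now = time.time() if now is None else now
        recent = [t for t in self._hits[ip] if now - t < WINDOW_SECONDS]
        recent.append(now)
        self._hits[ip] = recent
        if len(recent) >= BAIT_HIT_LIMIT:
            self._blocked_until[ip] = now + BLOCK_SECONDS

    def is_blocked(self, ip, now=None):
        """True while the temporary block for this IP is still active."""
        now = time.time() if now is None else now
        return self._blocked_until.get(ip, 0.0) > now
```

Because the block expires on its own, a shared or reassigned address is not punished forever for one crawler's visit.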

Trying to identify the scraper via fingerprinting and/or JavaScript is doomed to fail, as scrapers can use the same browsers as users (firefox+xdotool will do, but headless browsers tend to be more reliable and less resource-intensive).

The crawler is a separate system where the gains of using even less of a browser are significant.

Basically, it's training-data scraping vs. the serving path: the serving path will always have more resources per request.

@danjones000 @Codeberg the way Anubis works is by making it computationally prohibitive to get through the challenge. It's still possible, but it would require a significant amount of time to do so, something that crawlers don't like doing.

@danjones000 @Codeberg the whole point is to force the scrapers to run a whole browser, because running a whole browser is significantly more expensive.

These companies are evidently willing to pay an absolutely staggering cost to do their scraping.

I wonder, are they paying with their own money, or are they “borrowing” some unsuspecting strangers' compromised computers/routers/etc to do the work?

@binaergewitter Casualty of the week: Anubis challenges as an effective measure

@Codeberg maybe it's time to improve Anubis https://bsky.app/profile/techaro.lol

@Codeberg I observed them too about a month ago. I then sent the whole AS to Google's recaptcha and it worked (at least people who can solve recaptcha can still access our site while these bots can't).

@Codeberg boy Huawei is so nasty

I wonder who are the biggest offenders on this matter...

"AI crawlers learned how to solve the Anubis challenges"

Why does the EU discuss chat control and not AI crawler control, again?

@Codeberg eBPF could be more effective and easier on the CPU, since it acts on a much lower network layer. Anubis kinda has its limits and it's way too easy to circumvent (as you found out).

Maybe it's worth considering eBPF (if you haven't already).

And thanks guys for your work. I'm a proud supporter and I'll continue to support your work. Companies shouldn't control the Open Source space

@Codeberg It's going to be a rat race after all, I expected this to happen eventually. Surprising it took this long.

@Codeberg Perhaps it's time to stop letting robots solve puzzles and instead feed them bombs. Do we know how well a ZIP bomb works on these crawlers?

@Codeberg Have you looked into serving these LLM crawlers alternative versions of the site, with poisoned data? (And rate-limiting, of course.) I know it would be additional work for you to implement this, but... it might be effective.

I'm thinking you could have a precomputed set of 1000 different poison repos that get served up randomly, each of which is a Markov-chain-scrambled version of the files in a real repo.

(I wrote https://codeberg.org/timmc/marko to do something similar to the contents of my blog posts—a Markov model on either characters or words.)
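A word-level Markov scrambler can be sketched in a few lines of Python. This is an independent illustration of the technique and not how marko itself is implemented; the function names and the first-order (one word of context) model are assumptions.

```python
import random
from collections import defaultdict


def build_model(text):
    """Map each word to the list of words that follow it in the source."""
    words = text.split()
    model = defaultdict(list)
    for current, following in zip(words, words[1:]):
        model[current].append(following)
    return model


def scramble(text, length, seed=None):
    """Generate `length` words that locally resemble the source text."""
    words = text.split()
    if not words or length <= 0:
        return ""
    rng = random.Random(seed)
    model = build_model(text)
    word = rng.choice(words)
    out = [word]
    for _ in range(length - 1):
        followers = model.get(word)
        # Dead end (word only appears last): restart from a random word.
        word = rng.choice(followers) if followers else rng.choice(words)
        out.append(word)
    return " ".join(out)
```

Precomputing the output with fixed seeds, as suggested above, would keep the cost of serving poisoned pages close to that of serving static files.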

We apologize for a period of extreme slowness today. The army of AI crawlers just leveled up and hit us very badly.

The good news: We're keeping up with the additional load of new users moving to Codeberg. Welcome aboard, we're happy to have you here. After adjusting the AI crawler protections, performance significantly improved again.